
The Pioneering of DFT

Integrating DFT into the Diagnostic Engineering Discipline


Testability, as a concept, was first created in 1964, based on a concept formulated by Ralph A. De Paul Jr. during the prior decade. It was later formally authored by William Keiner, certified by the U.S. Congress, and published as MIL-STD-2165 in 1986. The concept of “Fault-Isolation System Test-ability”, as initially described in 1965 by Mr. De Paul, evolved into simply “System Testability”. This was before acronyms such as Designing-for-Testability (DFT), Design-for-Test (DfT), or Design-to-Test (DTT) were established to describe specific, segmented activities within the fully intended scope of designing for testability. The objective was to influence the design so that it could be used for testing, any and all testing, and concurrently to influence the design for effective sustainment – or as DSI terms it, Design-For-Sustainment (DFS).

Designing for test, testability, sustainment, etc. must be a multidisciplinary, inclusive process without design-domain barriers. The activity must be an efficient and exhaustive approach that always keeps an eye on the sustainment objectives and thereby contributes to forming a byproduct: the Diagnostic Design Knowledgebase. However, gaining any true knowledgebase requires that the data artifacts owned by each relevant discipline be formally integrated, which goes beyond the simple sharing of data (or data “interoperability”).

Because the original objective of Design For Testability was to serve the sustainment lifecycle while being performed during the design development lifecycle, any DFT activity requires the gathering, restructuring and organization of the DFT data. This includes every design comprising the system, however it is defined, and considers how each design is interrelated with any other design(s) within a fielded product: a fully integrated system.

The first challenge with such a “Design For Testability” endeavor is to show that the people controlling the budgets in Design Development also realize the financial benefits that are so naturally realized in the sustainment lifecycle, since the bulk of the process improvement is “prepped”, performed and funded in the Design Development lifecycle(s). Although the “technology challenge” of attaining such a high level of effective diagnostic competency has been met, an answer to the companion “budgetary challenge” (design vs. logistical support) has become broadly available only in the past few years. To meet this challenge, the “Design Development” activity requires its own independent “ROI”, separate from the support “ROI”, as a stipulation before instituting a process that more naturally and greatly benefits the Sustainment activities.

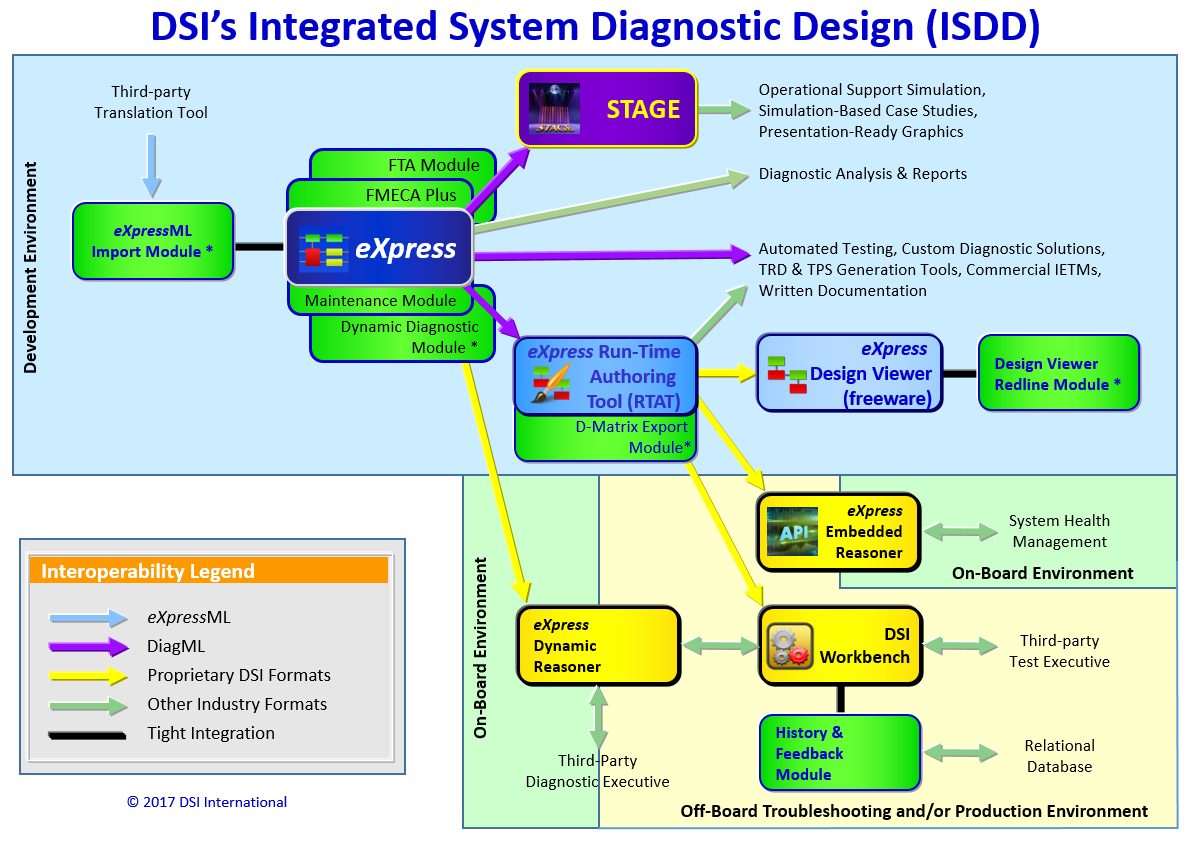

As a result of taking full advantage of DSI’s ISDD, DFT is a significantly profitable endeavor in both the Design Development and the Sustainment Lifecycles!

DFT Must Be Conscious of Other Designs

When focused on any independent design, DFT is important and can be extremely valuable. But for an interdependent design, awareness of the entire functional scope of all designs, including those developed by other design teams or organizations, becomes an equally imperative consideration as design complexity and intended operational sophistication increase. As independently developed designs are integrated into larger designs, the assemblies, subsystems or fielded product often cannot conclusively rely on the “selective” DFT activities performed exclusively for each independent lower-level design. It is therefore too often discovered that the DFT applied independently to one design piece, however valuable in manufacturing, does not carry equal value from the “fielded product” and supportability perspective. Unless the functional and failure interrelationships of each independent design are established in an integrated systems’ diagnostic architecture, the utility of DFT for improving the operational availability of the fielded product is liable to be inadvertently subverted.

When the functional and failure interdependencies between each design entity are captured, regardless of design domain (electronic, hydraulic, mechanical, optical, software, etc.), DFT becomes significantly more effective. The holistic, integrated-systems DFT can then determine the corresponding (operational) Fault Groups for the fielded product in accordance with any (operational) “integrated” diagnostic constraints. From there, the transition to designing for sustainment can begin.
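One way to picture a Fault Group is as a set of components that the available tests cannot tell apart. The sketch below is only an illustration under that assumption: it groups components that are covered by exactly the same tests. The function name `fault_groups`, the test names and the component names are hypothetical, and this is not a description of how eXpress computes its Fault Groups.

```python
from collections import defaultdict
from typing import Dict, List, Set


def fault_groups(coverage: Dict[str, Set[str]]) -> List[List[str]]:
    """Group components that share an identical test signature.

    `coverage` maps a test name to the set of components it covers.
    Components covered by exactly the same tests cannot be separated
    by those tests, so they fall into the same group (an assumption
    made for this illustration).
    """
    # Build each component's signature: the set of tests that cover it.
    signature: Dict[str, Set[str]] = defaultdict(set)
    for test, components in coverage.items():
        for component in components:
            signature[component].add(test)

    # Components with equal signatures belong to the same group.
    groups: Dict[frozenset, Set[str]] = defaultdict(set)
    for component, tests in signature.items():
        groups[frozenset(tests)].add(component)

    return sorted(sorted(group) for group in groups.values())


# Hypothetical coverage for an integrated design with two tests.
example_coverage = {
    "T1": {"PSU", "AMP", "FILTER"},
    "T2": {"AMP", "FILTER"},
}
print(fault_groups(example_coverage))
```

In this made-up example, AMP and FILTER share the same signature and so form one group, while PSU stands alone. Adding a test that covers only one of the two would split the group.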

Test Coverage and “Interference”

In a diagnostic engineering analysis, there are several critical issues to be considered. Probably the most critical among them is “Test Coverage”. Test Coverage describes which components in the design are included in a particular diagnostic test. Diagnostic testing refers to a process, similar to measurement testing, in which the analysis determines whether a component can be indicted or exonerated. The accumulation of diagnostic evaluations then leads to a “Diagnostic Conclusion” or hypothesis.
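The indict/exonerate reasoning can be sketched in a few lines. The example below is a minimal illustration that assumes a single failed component and perfectly reliable tests: a passing test exonerates everything it covers, and a failing test narrows the suspects to what it covers. The function `diagnose` and all test and component names are hypothetical and are not taken from any particular tool.

```python
from typing import Dict, Set


def diagnose(coverage: Dict[str, Set[str]], outcomes: Dict[str, bool]) -> Set[str]:
    """Return the remaining suspects after applying test outcomes.

    `coverage` maps a test name to the components it covers.
    `outcomes` maps a test name to True (pass) or False (fail).
    Assumes a single fault and tests that never give wrong results.
    """
    # Start with every component that any test can observe.
    suspects: Set[str] = set().union(*coverage.values())
    for test, passed in outcomes.items():
        if passed:
            # A passing test exonerates the components it covers.
            suspects -= coverage[test]
        else:
            # A failing test indicts only the components it covers.
            suspects &= coverage[test]
    return suspects


# Hypothetical design with three tests.
example_coverage = {
    "T1": {"PSU", "AMP"},
    "T2": {"AMP", "FILTER"},
    "T3": {"PSU"},
}
example_outcomes = {"T1": False, "T2": False, "T3": True}
print(diagnose(example_coverage, example_outcomes))
```

Here the two failing tests overlap only on AMP, and the passing test clears PSU, so the diagnostic conclusion is AMP. If the declared coverage is wrong or incomplete, the same reasoning produces a wrong conclusion.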

As comprehensive as that approach sounds, it is impure. Quite often, the diagnostic analysis can be influenced by a concept known as “Interference”. Interference is a systemic characteristic of almost every complex design, in which unexpected components or their functions (upstream, downstream, or tangential to the defined diagnostic test coverage) are able to impact the diagnostic hypothesis. The only way to discover this kind of diagnostic Interference is to assess the diagnostic integrity of the design within an advanced diagnostic engineering endeavor. This is a core competency of the eXpress diagnostic engineering application, and the primary reason why eXpress is sought to deliver on the diagnostic demands of the largest and most complex systems designed today.
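A simplified way to look for Interference is to compare what a test is declared to cover with everything that can actually reach its test point. The sketch below assumes the design is described as a dependency graph and considers only upstream influence, which is narrower than the upstream, downstream and tangential cases described above. The names `upstream`, `interference` and the component labels are hypothetical.

```python
from typing import Dict, List, Set


def upstream(feeds: Dict[str, List[str]], node: str) -> Set[str]:
    """Return every component that can influence `node`, transitively.

    `feeds` maps a node to the components that feed it directly.
    """
    seen: Set[str] = set()
    stack = list(feeds.get(node, []))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(feeds.get(current, []))
    return seen


def interference(feeds: Dict[str, List[str]], test_point: str,
                 declared: Set[str]) -> Set[str]:
    """Components that can affect the test point but are not declared covered."""
    return upstream(feeds, test_point) - declared


# Hypothetical signal chain: SENSOR -> FILTER -> AMP -> TP1, with PSU feeding AMP.
example_feeds = {
    "TP1": ["AMP"],
    "AMP": ["FILTER", "PSU"],
    "FILTER": ["SENSOR"],
}
print(interference(example_feeds, "TP1", {"AMP", "FILTER"}))
```

In this example, the test at TP1 is declared to cover AMP and FILTER, yet a failure in PSU or SENSOR could also change its result. Those two components are the candidates for Interference in this simplified model.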

As a result of this rich coverage analysis capability, the diagnostic interpretation of a testability analysis becomes quite extensive and accurate, regardless of whether the design captured in eXpress is small or is assessed interdependently with other designs or subsystems in a much larger capacity. As a consequence of this higher level of analysis, Built-In-Test (BIT) coverage may be found to be deficient in the operational state of a deployed environment. It then becomes possible to extract more value from the DFT, DfT and DTT performed in the test environment. Ultimately, this is a critical step in the transition to an effective diagnostic capability that can be deployed immediately, with the certainty of fielding an accurate, consistent and reliable sustainment support strategy.

Establishing a Diagnostic Design “Knowledgebase”

When interdisciplinary design data can be collected, (re)structured and (re)organized in a consistent and deliberate manner that establishes the functional or failure interdependencies and interrelationships within any complex system, a “Diagnostic Design Knowledgebase” can be formed. Using the eXpress diagnostic engineering tool, several critical steps lead to establishing a Diagnostic Design Knowledgebase.

The first step is the creation of a model that establishes the functional interrelationships between objects or very high-level functional systems, if performed early in design development. A more complex system’s model will be framed by the use of empty functional models until more detail is determined or available during development, allowing high-level feedback to influence diagnostic design to meet the objectives of alternative sustainment approaches.

As the design development progresses, the functional interrelationships can be synced up with other “integrated designs”. In a larger sense, this captures the functional or failure interrelationships within each “subsystem”, any lower-level design(s), and their interdependent designs within any given hierarchical “integrated system” structure. This design activity leverages a Dependency Analysis: the way that components’ test points, interconnections, and other models are interrelated throughout the integrated systems hierarchy. As mentioned above, diagnostic tests are incorporated to take advantage of the coverage revealed during modeling preparations. Finally, various Diagnostic Analysis Studies can combine all the modeling information to assess and improve the design as an “integrated” capability.

From that point forward, the appropriate Test Coverage for each point of test, observation or location of health status interrogation (BIT sensors, ATE, etc.), as based upon such diagnostic analyses, will all be brought together to provide an “agile” Diagnostic Design Knowledgebase. This knowledgebase will represent the captured expert diagnostic design in such a form that it can be shared, assessed, modified, validated and augmented with other design or support activities or partnering organizations. Obviously, capturing and validating the test coverage for complex systems is an inherent, and unique, advantage of using eXpress.

DFT for the Semiconductor Industry

Professionals in the semiconductor industry have an acutely specialized understanding of Design for Test. In that industry, the activity concerns itself with digital designs, using techniques such as ABIST, AMBIST, ATPG, Boundary Scan, BSC, BSR, CBIST, FASTSCAN, IBIST, JTAG, Logic Test, LOGICBIST, MBIST, Memory Test, Mixed-Signal Test, etc. While these are established techniques today and have significant merit for that specific piece of DFT, the investment in this flavor of DFT has not attracted enough attention as a valuable or reusable asset for the systems integrator of larger “integrated” products (trains, military vehicles, missile systems, airplanes, etc.). This will continue to be a challenge for the DFT community in this sector until it reaches out to integrate with methods or tools that can leverage this investment with, and across, other designs and design domains in a seamless transition, and so become effective in supporting any evolving sustainment paradigm(s).

DFT and its Concept Of System

The term “system” is not universally understood in a narrow, specific sense, so it is advantageous to embrace its broadest meaning. It therefore becomes critical, regardless of which DFT, DfT or DTT activities are embraced, that the investment made leads to meaningful interconnectivity with other interdependent design domains and activities throughout the sustainment lifecycle. Consequently, such expanded program value needs to address the servicing and balancing of four (4) significant goals of testability:

1. Availability of Equipment or Systems

2. Operational or Mission Success, or Reliability

3. Operational Safety

4. Cost of Ownership

The various “ilities” (e.g., testability, reliability, maintainability, sustainability, etc.) aspire to perform correlated activities in an effort to integrate a system’s ability to be developed and supported effectively. But in servicing this objective, these disciplines typically behave as competing activities that, unfortunately, each aspire to a greater priority and, thereby, a greater influence on the program budget.

DFT as a Design Influence Activity

To bring everything together, it is fair to assert that the objective of designing for sustainment depends on all of the design and support activities noted above. Therefore, the real focus in diagnostic engineering should be to influence the design activity whenever and however possible. It should be inclusive of all design disciplines and not restricted to any specific design domain (e.g. digital). The greatest dividend of such a solution would be to establish a test-tool-independent capability, which was the originally intended scope of the full systems testability discipline. These are just some of the initial benefits available to today’s DFT community if it embraces a much broader vision. Fortunately, some 50 years after its introduction, industry is finally learning to appreciate that Design For Testability needs to be more broadly encouraged as an essential design influence activity.

DFT to “Set the Table”

Ultimately, DFT needs to be carried out of the production lab environment and integrated into the sustainment environment, in a way that allows it to be directly reused and repurposed through its integration within the captured expert (diagnostic design) knowledgebase. This must not be limited by design domain, nor exclusive to any independent DFT tools, methods or interpretation of any DFT requirements. DFT can then “Set the Table” for much more comprehensive diagnostic tools to leverage its low-level “Test Coverage” capability across domains, design hierarchies, partnering organizations and a myriad of current and evolving sustainment methods and paradigms.

With ISDD, investment into DFT can be much more broadly (re)used in a Design for Sustainment (DFS) paradigm.

The role of DFT in the process described in the image above is to provide an output that describes the test coverage of the functions on any of the designs. This “test” data can be imported and captured in the eXpress diagnostic modeling tool environment. From this point, the diagnostic capability can be fully validated at any point used by the diagnostics, as required by the sustainment philosophy of the fielded or operational asset.

Related Links:

Diagnostic Assessment of BIT and Sensors

Analysis of Diagnostic Impact Upon False Alarms

Optimize Sensor Placement to Reduce False Alarms

DFT is So Beautiful

Boundary Scan modeling in eXpress

Related Videos:

Standards-Powered, COTS-Based Solution for Through-Life Support

Diagnostic Validation