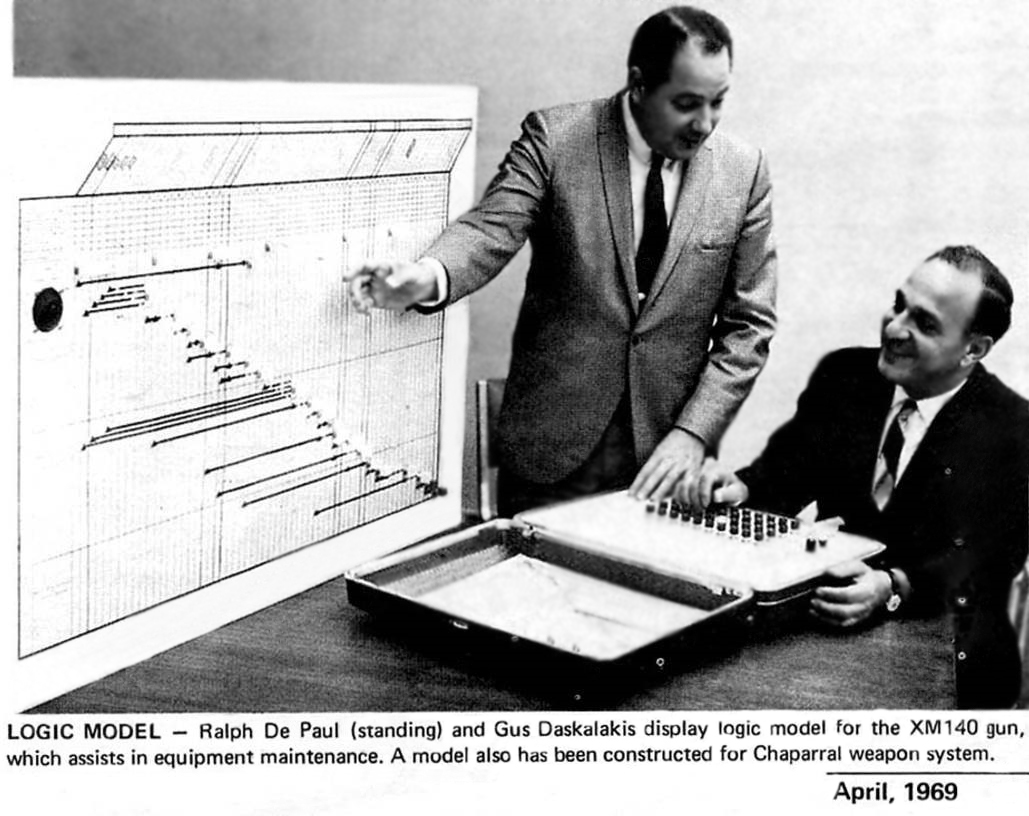

DSI was founded in 1975 by Ralph De Paul, Jr. with a single vision: to create a new approach to diagnostics. He began his quest to improve the operational readiness of equipment following his real-life experiences from serving in the war. Today, our eXpress software provides a modern approach to Ralph’s original vision. Shown to the left is a picture of Ralph demonstrating his new Diagnostic Modeling Approach before he founded DSI. The approach was based on his innovative application of Dependency Modeling to the world of Diagnostics.

As one might say, “the rest is history…”

A Short History of Diagnostic Modeling Part I: The Early Years

In the beginning, there was the dependency model: a way of representing the causal relationships between events and the various agents that enable those events to occur. Dependency modeling was initially developed by Ralph A. De Paul, Jr. in the 1950s as a method of developing more “responsible” diagnostics after several of De Paul’s friends were killed in the Korean War when their equipment malfunctioned in ways that diagnostic developers had not anticipated. Logic Modeling (as De Paul began calling the functional dependency modeling process in the 1960s) allowed all functions of a device or system under test to be mapped to the events, testable or not, that depend upon the proper operation of those functions.
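To make the idea of mapping functions to the events that depend on them a little more concrete, here is a minimal Python sketch of a generic functional dependency model used for single-fault isolation. The test names, function names, the `MODEL` table, and the `isolate` helper are all hypothetical illustrations, and the single-fault assumption is ours; this is not the data format or algorithm of LOGMOD, STAT, or eXpress.

```python
from typing import Dict, Set

# Hypothetical functional dependency model: each test (an observable event)
# maps to the set of functions whose proper operation it depends on.
# All names here are illustrative only.
MODEL: Dict[str, Set[str]] = {
    "T1_power_ok": {"F_power"},
    "T2_signal_out": {"F_power", "F_oscillator", "F_amplifier"},
    "T3_osc_freq": {"F_power", "F_oscillator"},
}


def isolate(model: Dict[str, Set[str]], results: Dict[str, bool]) -> Set[str]:
    """Return the candidate failed functions, assuming a single fault.

    A passed test exonerates every function it depends on; a failed test
    restricts the suspects to the functions it depends on.
    """
    # Start with every function that appears anywhere in the model.
    candidates: Set[str] = set().union(*model.values())
    for test, passed in results.items():
        deps = model[test]
        if passed:
            candidates -= deps  # these functions must be working
        else:
            candidates &= deps  # the single fault must lie among these
    return candidates


if __name__ == "__main__":
    # T1 and T3 pass and T2 fails, so only the amplifier function remains suspect.
    observed = {"T1_power_ok": True, "T2_signal_out": False, "T3_osc_freq": True}
    print(isolate(MODEL, observed))
```

Because the model is expressed in terms of functions rather than specific failure modes, a table like this can be drafted from a design's intended behavior before implementation details are known.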

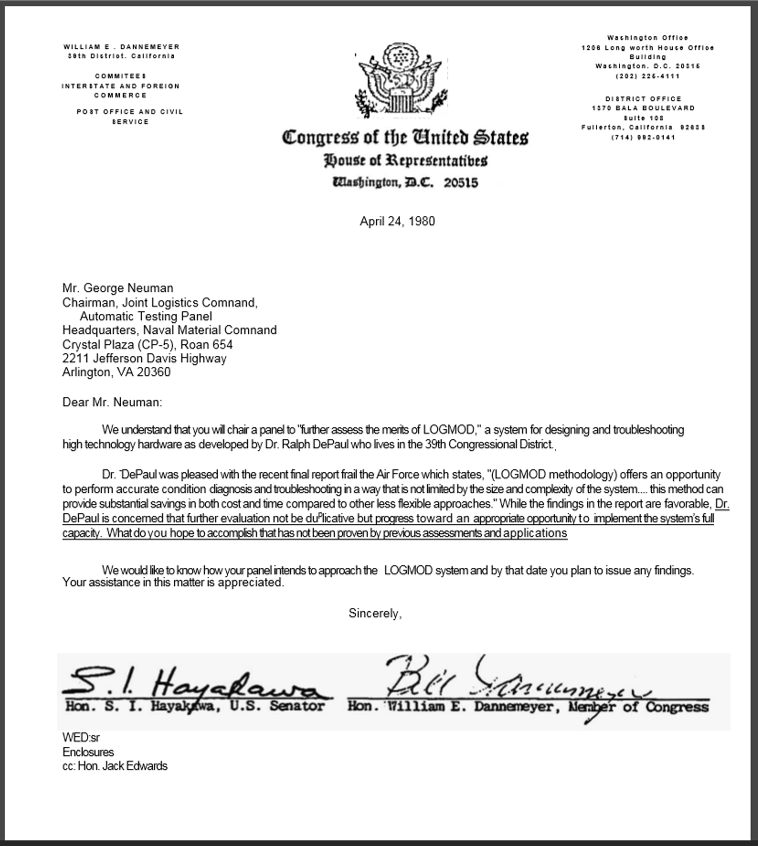

In 1974, De Paul’s dependency modeling concept was incorporated into MIL-M-24100B, a military manual that documented the construction of the maintenance dependency chart (the central task of the Logic Modeling process) as part of the development of Functionally Oriented Maintenance Manuals (FOMM). Also in the 1970s, De Paul founded DETEX Systems, Inc. (now DSI International) and offered his Logic Modeling process, newly hosted as the computer program LOGMOD, as a commercial product for the DoD diagnostics community. After a string of LOGMOD successes in the 1970s and 1980s, other companies began to offer their own dependency model-based approaches to diagnostic development and assessment, often tweaking the dependency modeling process to serve their own particular bias or niche. As more members of the diagnostic community jumped on the dependency modeling bandwagon, modeling approaches soon began to diverge. One camp, whose proponents envisioned dependency modeling as the missing link between FMECA/Reliability Block Diagrams and run-time diagnostics, adapted De Paul’s original dependency modeling technique to map specific failure modes (rather than functions) to tests. Rather than modeling a system or device the way it is supposed to operate, this approach advocated modeling it the way it is expected to fail. Although this “twist” on dependency modeling may have been more enticing to run-time diagnostic developers, it suffered from two major disadvantages:

1. incompleteness: the effectiveness of the resulting diagnostics was restricted by the modeler’s ability (or inability) to enumerate all of the ways in which the unit under test was capable of failing, and

2. limited usefulness: because failure-oriented dependency models require that the specific failure modes of a design or system be identified, models can’t be developed until implementation details become available (relatively late in the design process), thereby limiting their applicability as an engineering tool during product development.

Meanwhile, De Paul and others continued preaching (and demonstrating) the value of functional dependency modeling. Although, at the time, functional diagnostics were not always an “easy sell” to failure-oriented diagnostic developers, De Paul’s functional approach to diagnostic modeling nevertheless promised that rigorous analysis would result in 100% reliable diagnostics (a guarantee that could not be matched by modeling approaches that focused on failure modes), thereby remaining true to De Paul’s original vision of diagnostic “responsibility.” In the late 1970s, De Paul began offering LOGMOD not only as a tool for the development of fault isolation logic, but also as an aid for testability and maintainability design, a move that would greatly influence the direction of both his own company (DSI) and the diagnostic modeling community in general. Recognizing that the functional approach of his Logic Modeling process was uniquely suited to providing feedback about a design’s diagnostic capability during early stages of the development cycle (when implementation specifics are not yet available), De Paul pioneered the field of model-based testability analysis, adding figures of merit to the LOGMOD analysis reports that would later become standard measures of design testability. (In fact, in the early 1980s, De Paul worked closely with the writers of MIL-STD-2165, the first military standard on testability analysis.)

A Short History of Diagnostic Modeling Part II: The Second Generation

In the mid-1980s, DSI was a member of the team that developed the Weapon System Testability Analyzer (WSTA) portion of the U.S. Navy’s Integrated Diagnostic Support System (IDSS). Although De Paul’s company was instrumental during the conceptual planning and pitching of WSTA to the Navy, DSI was allotted only a relatively small portion of the actual implementation of the tool. Because the resulting program fell so far short of what it could have been (that is, of DSI’s original vision for the tool), DSI personnel to this day remember the WSTA years with a certain amount of embarrassment. Sometimes, however, one must take a step backward before moving forward. In this case, De Paul’s dissatisfaction with the results of the WSTA project led directly to the development of STAT, DSI’s second-generation diagnostic engineering tool.

STAT began its life as a more robust, full-featured implementation of the Logic Modeling process. Whereas LOGMOD had run only on a limited number of older platforms, STAT was designed to run on a wide variety of systems, including not only those with established operating systems such as DOS, UNIX, and the Macintosh OS, but also those that ran Microsoft’s new Windows software. The STAT user interface, which was more sophisticated than those of either LOGMOD or WSTA, allowed traditional dependency models to be developed, reviewed, and modified with unprecedented ease.